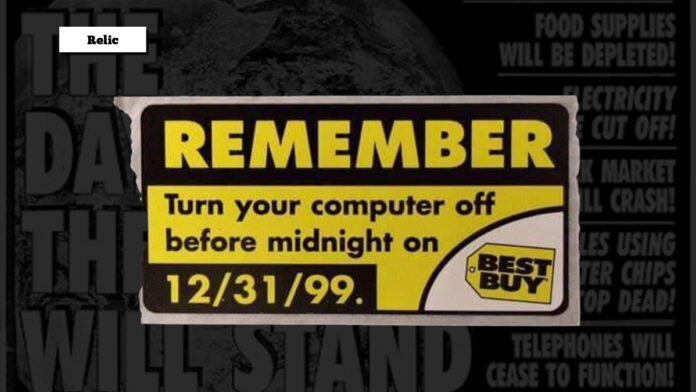

I have been with Gadgets for about half of its existence, which is a long time in terms of technological advances. Over that span, smartphones and other gadgets have only gotten better. There have also been moments of uncertainty in the technology world, and I happened to witness one of them: the Y2K bug. Why was it such a big deal, and what came out of it?

Most reports explained that the Y2K bug arose because computers stored years as two digits. January 1, 1999, for example, was stored as 1/1/99. When the clock turned over to January 1, 2000, the date would be stored as 1/1/00, and computers would read it not as January 1, 2000, but as January 1, 1900. Years were stored with two digits instead of four because memory for mainframes and personal computers was expensive. Some reports priced memory at USD10/KB to over USD100/KB in 1975 (USD59.10 to USD591.03 in 2024 dollars), and to lower prices and make computers accessible to more people, many manufacturers chose to use two digits for the year instead of four. The trade-off expanded the use of computers at the cost of leaving them vulnerable to failure once the year 2000 arrived.
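To make the mechanism concrete, here is a minimal Python sketch of how a program might have turned a two-digit year into a full year. The rule of simply adding 1900, and the function names `naive_year` and `parse_mmddyy`, are hypothetical stand-ins for the kind of logic found in legacy code, not code from any real system.

```python
def naive_year(yy: int) -> int:
    # Hypothetical legacy rule: every two-digit year is assumed to mean 19yy.
    return 1900 + yy


def parse_mmddyy(text: str) -> tuple[int, int, int]:
    # Split an M/D/YY string and return (year, month, day) so tuples sort by date.
    month, day, yy = (int(part) for part in text.split("/"))
    return naive_year(yy), month, day


new_years_eve = parse_mmddyy("1/1/99")
new_years_day = parse_mmddyy("1/1/00")

print(new_years_eve)                  # (1999, 1, 1)
print(new_years_day)                  # (1900, 1, 1)
print(new_years_day > new_years_eve)  # False: the later date sorts 99 years earlier
```

Because the year 2000 comes out as 1900, any sorting, comparison, or elapsed-time calculation that spans the rollover goes wrong.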

The earliest warnings date back to 1958, when Bob Bemer noticed the problem while working on genealogical software. For the next 20 years, he tried to raise the issue with stakeholders ranging from fellow programmers to IBM, the United States federal government, and international bodies such as the International Organization for Standardization, recommending that the COBOL picture clause be used to specify four-digit years.

The issue was first raised online as early as 1985, in a Usenet newsgroup post by Spencer Bolles: “I have a friend that raised an interesting question that I immediately tried to prove wrong. He is a programmer and has this notion that when we reach the year 2000, computers will not accept the new date. Will the computers assume that it is 1900, or will it even cause a problem?” The question gained traction in the 1990s, when Computerworld ran Peter de Jager's article “Doomsday 2000,” and in 1995 programmer David Eddy coined the term Y2K.

The problem even reached the United States Department of Defense. “The Y2K problem is the electronic equivalent of the El Nino and there will be nasty surprises around the globe,” said former United States Deputy Secretary of Defense John Hamre. The brokerage industry began addressing the problem in the 1980s, largely because bonds had maturity dates beyond the year 2000. The New York Stock Exchange (NYSE) hired 100 programmers and spent USD20 million on its Y2K work.

Date-handling code had to change as well. Credit card systems showed bugs as early as 1997, when cards with expiration dates beyond 2000 ran into processing problems, and a lawsuit was even filed over the issue. Microsoft Excel, the C programming language, JavaScript, UNIX, and the Windows 3.x File Manager all needed adjustments, along with a slew of other programs and applications. For legacy systems, proposed fixes included expanding dates to four digits, date windowing (interpreting a two-digit year relative to a chosen pivot year), and date compression (packing a full date into the space the old two-digit fields used), among others.
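The credit card problem and the date-windowing fix can both be sketched in a few lines of Python. This is an illustration under stated assumptions: the pivot of 50 and the names `expand_naive`, `expand_windowed`, and `is_expired` are hypothetical, and real systems chose their own windows.

```python
PIVOT = 50  # Hypothetical pivot: 00-49 -> 2000s, 50-99 -> 1900s.


def expand_naive(yy: int) -> int:
    # Legacy behavior: assume every two-digit year is in the 1900s.
    return 1900 + yy


def expand_windowed(yy: int, pivot: int = PIVOT) -> int:
    # Date windowing: map a two-digit year into a fixed 100-year window.
    return 2000 + yy if yy < pivot else 1900 + yy


def is_expired(expiry_mm_yy: str, today: tuple[int, int], expand) -> bool:
    # Compare (year, month) pairs; a card is valid through its expiry month.
    month, yy = (int(part) for part in expiry_mm_yy.split("/"))
    return (expand(yy), month) < today


today = (1997, 6)  # June 1997
print(is_expired("03/00", today, expand_naive))     # True: read as March 1900
print(is_expired("03/00", today, expand_windowed))  # False: read as March 2000
```

Windowing was popular because stored records did not have to change, only the code that read them. The trade-off is that it postpones the problem rather than solving it: with a pivot of 50, a card expiring in 2050 would be read as 1950. Expanding dates to four digits avoids that limit but requires changing the stored data.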

What happened on January 1, 2000, itself? Computer systems were affected, but to widely varying degrees. Reports from various countries ranged from minor glitches, such as new text messages being deleted, to radiation-monitoring systems failing at nuclear plants in Japan. In the United States, a video store charged a USD92,150 late fee for a tape it treated as 100 years overdue, and in Greece, 30,000 cash registers printed receipts dated 1900. Preparing for Y2K is estimated to have cost more than USD300 billion, though the true total may never be known given how widely the errors varied in type and severity. In 2024, a faulty CrowdStrike software update brought down computers worldwide and drew comparisons to the Y2K bug.

After January 1, 2000, came and went with much fanfare and relatively little effect on technology, people got on with their lives, relying more and more on gadgets and technology. Technology still has many issues to address to this very day, but people are now more proactive than ever about events similar to Y2K. In fact, I have since learned of issues involving the years 2010 and 2022, which went virtually unnoticed compared to Y2K. As long as we rely on technology, we have to be proactive about solving the problems it creates, and the Y2K bug is one example where prevention helped, even if the impact turned out smaller than people expected.

Words by Jose Alvarez

Also published in GADGETS Magazine September 2024 Issue.